Written by Luca Csanády / Posted at 8/25/22

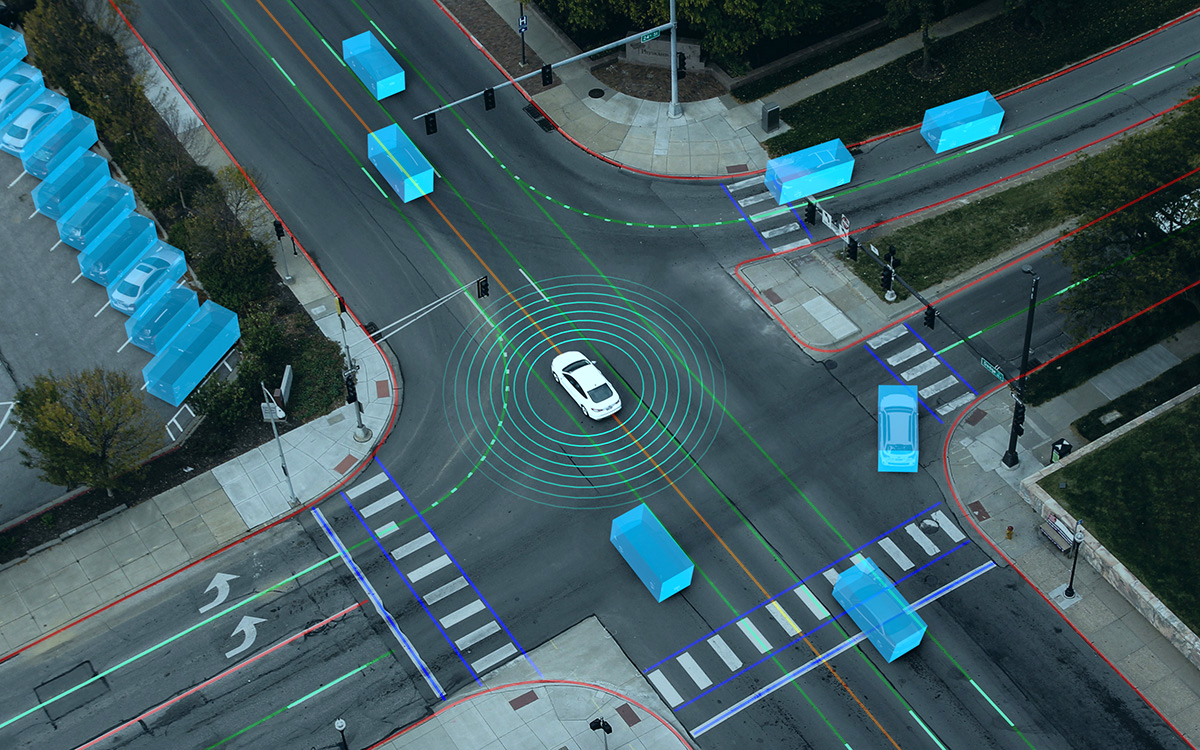

Auto annotation: cost-efficient, fast and adaptive

Annotation is the most expensive element of training data generation, so truly automatic annotation remains the holy grail of automated driving development – aside from the ability to generate synthetic data that is indistinguishable from real data.

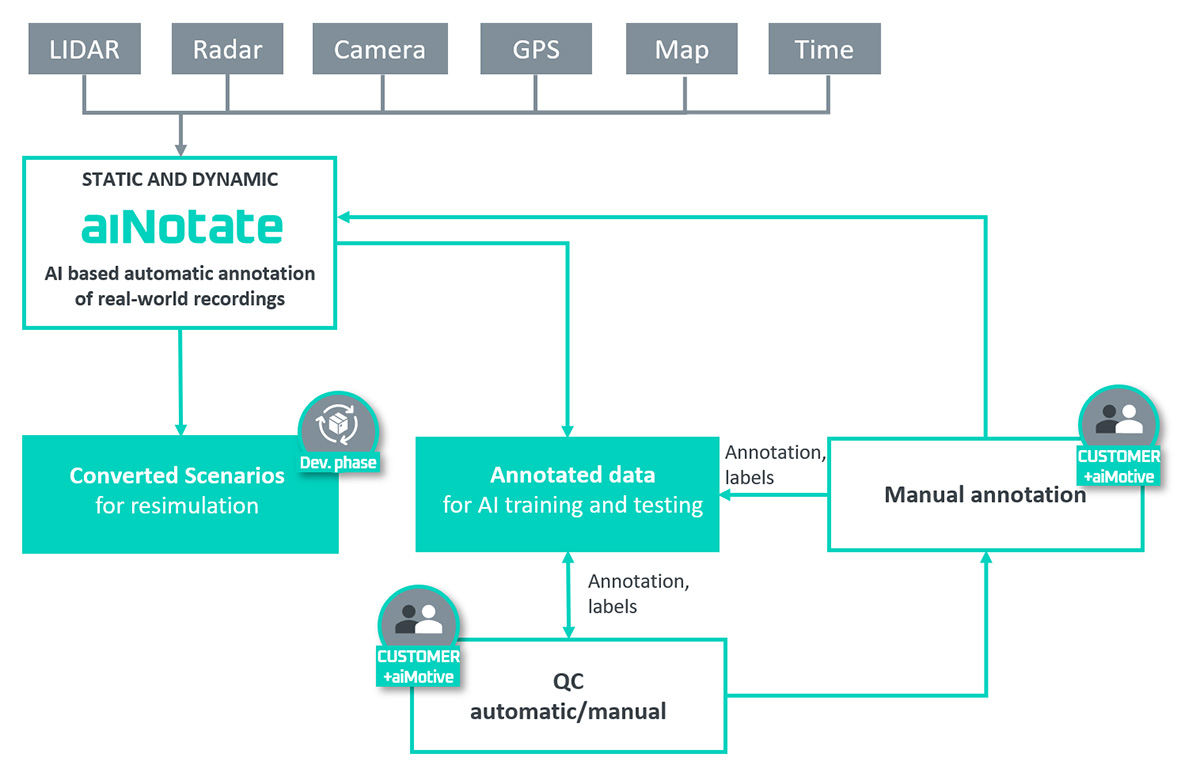

aiMotive has developed auto annotation software based on Neural Networks (NNs) as part of its aiData pipeline. It is called aiNotate.

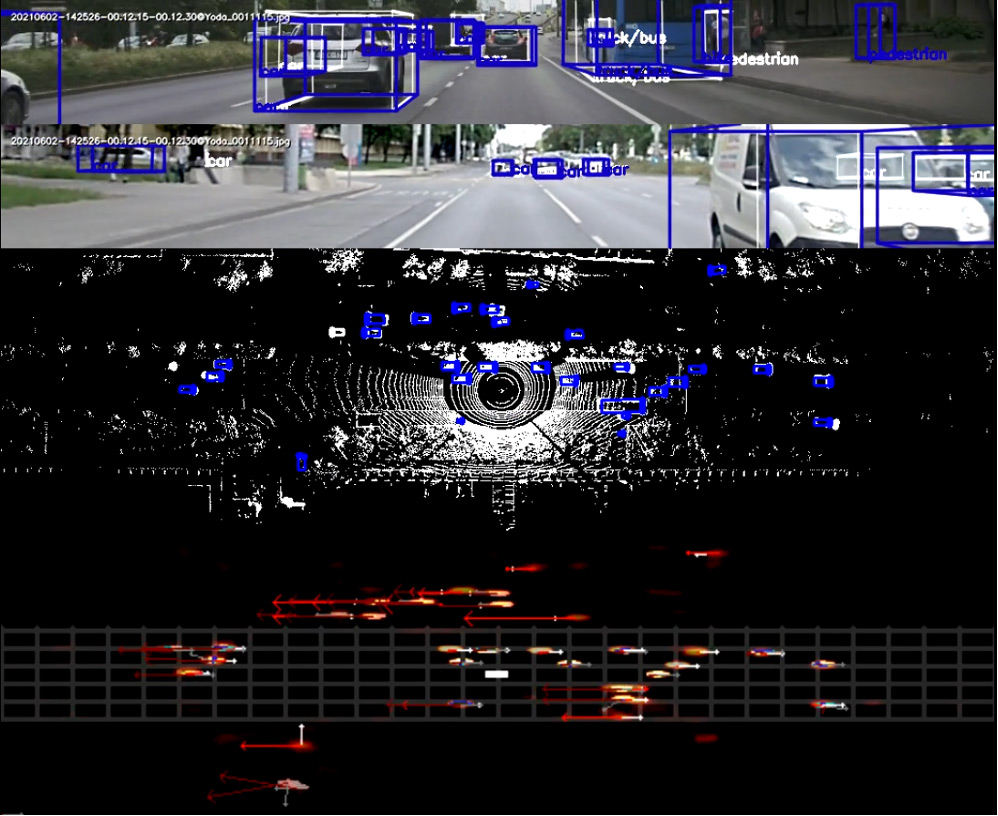

aiNotate has two parts: Static Auto Annotation (SAA), responsible for labeling the static elements of the environment such as road surface markings and traffic signs; and Dynamic Auto Annotation (DAA), responsible for labeling the dynamic elements of the environment, such as vehicles, VRUs (pedestrians, cyclists, etc.) and traffic light states.

Static Auto Annotation

The principle behind SAA is to overlay HD maps onto the recorded sensor data using high-precision localization. With this technique, you can harvest training data from the selected road sections indefinitely, under various weather, lighting, and traffic conditions. aiMotive’s SAA solution works with various third-party HD maps, but the company also uses internal tools and workflows to produce suitable HD maps if needed. Assuming the HD map is correct and up to date, the precision of this method is 100%, so no quality checking is required.
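
To make the overlay idea concrete, here is a minimal sketch of projecting HD map points into a camera image using the vehicle pose from localization. It is not aiMotive's implementation: the `EgoPose` and `PinholeCamera` types, the function names, and the simplifications (yaw-only orientation, an undistorted forward-facing camera located at the pose position) are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class EgoPose:
    """Hypothetical localization output: camera position in the map frame plus heading."""
    x: float
    y: float
    z: float
    yaw: float  # radians, counter-clockwise from the map x-axis


@dataclass
class PinholeCamera:
    """Hypothetical undistorted, forward-facing camera (intrinsics in pixels)."""
    fx: float
    fy: float
    cx: float
    cy: float
    width: int
    height: int


def world_to_ego(point, pose):
    """Express a map-frame point in the ego frame (x forward, y left, z up)."""
    dx, dy, dz = point[0] - pose.x, point[1] - pose.y, point[2] - pose.z
    c, s = math.cos(pose.yaw), math.sin(pose.yaw)
    # Apply the inverse of the yaw rotation to go from map axes to ego axes.
    return (c * dx + s * dy, -s * dx + c * dy, dz)


def project(point_ego, cam, min_depth=0.1):
    """Return pixel coordinates (u, v), or None if the point is not in view."""
    forward, left, up = point_ego
    if forward <= min_depth:
        return None  # behind the camera or too close to project reliably
    # Image u grows to the right and v grows downward, hence the minus signs.
    u = cam.cx - cam.fx * left / forward
    v = cam.cy - cam.fy * up / forward
    if 0 <= u < cam.width and 0 <= v < cam.height:
        return (u, v)
    return None


def label_frame(map_points, pose, cam):
    """Project every visible map point into the image to form 2D labels."""
    labels = []
    for point in map_points:
        pixel = project(world_to_ego(point, pose), cam)
        if pixel is not None:
            labels.append(pixel)
    return labels
```

In this sketch, a lane marking point 10 m straight ahead of a camera mounted 1.5 m above the road, with a focal length of 1000 px, lands 150 px below the principal point. Because the labels come directly from the map geometry, any error in the map or in the pose shows up as a misaligned label, which is why the method depends on an accurate map and high-precision localization.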

Meanwhile, aiMotive is also developing a static auto annotation method that relies not on precise HD maps but on a trainable neural network, which will be even more scalable than the current solution.

Dynamic Auto Annotation

DAA is based on a network similar to MS2N (Multi-Sensor Model-Space Neural Network), aiDrive’s perception network, but it is not bound by real-time constraints or hardware performance limitations. We call it SuperMS2N. It automatically detects objects of interest using a combination of lidar, camera, and radar data, and uses an ensemble of classical and AI-based methods to produce 3D bounding boxes around objects and categorize them. These labels can be used to train a model-space neural network for object detection or, in combination with SAA output, a multi-headed network that performs both road/lane and object detection, just like the original MS2N.
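
The post does not describe how the ensemble combines its members, so the following is only one plausible sketch of the general idea: candidate 3D boxes from several detectors are grouped by center distance, then merged by confidence-weighted averaging with a weighted vote on the class. The `Box3D` type, the `fuse_detections` function, and the 1 m grouping threshold are hypothetical, and box orientation is left out to keep the example short.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Box3D:
    """Hypothetical detection: axis-aligned 3D box with a class and a confidence."""
    center: tuple  # (x, y, z) in metres
    size: tuple    # (length, width, height) in metres
    label: str
    score: float   # confidence, assumed to be > 0


def weighted_mean(vectors, weights):
    """Component-wise weighted mean of equally sized tuples."""
    total = sum(weights)
    return tuple(
        sum(w * vec[i] for vec, w in zip(vectors, weights)) / total
        for i in range(len(vectors[0]))
    )


def fuse_detections(detections, max_dist=1.0):
    """Greedily group boxes whose centers are close, then merge each group."""
    clusters = []
    # Visit the most confident boxes first so they seed the clusters.
    for det in sorted(detections, key=lambda d: d.score, reverse=True):
        for cluster in clusters:
            if math.dist(det.center, cluster[0].center) <= max_dist:
                cluster.append(det)
                break
        else:
            clusters.append([det])

    fused = []
    for cluster in clusters:
        weights = [d.score for d in cluster]
        votes = defaultdict(float)
        for d in cluster:
            votes[d.label] += d.score  # confidence-weighted class vote
        fused.append(
            Box3D(
                center=weighted_mean([d.center for d in cluster], weights),
                size=weighted_mean([d.size for d in cluster], weights),
                label=max(votes, key=votes.get),
                score=max(weights),
            )
        )
    return fused
```

Since auto annotation runs offline, a real ensemble can afford much heavier members and matching logic than this; the sketch only shows why agreement between independent methods tends to yield more stable boxes than any single detector.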

Benefits

The output of aiNotate can be used for several purposes, such as training or validation data for NNs, or input for recreating real-world scenarios in simulations.

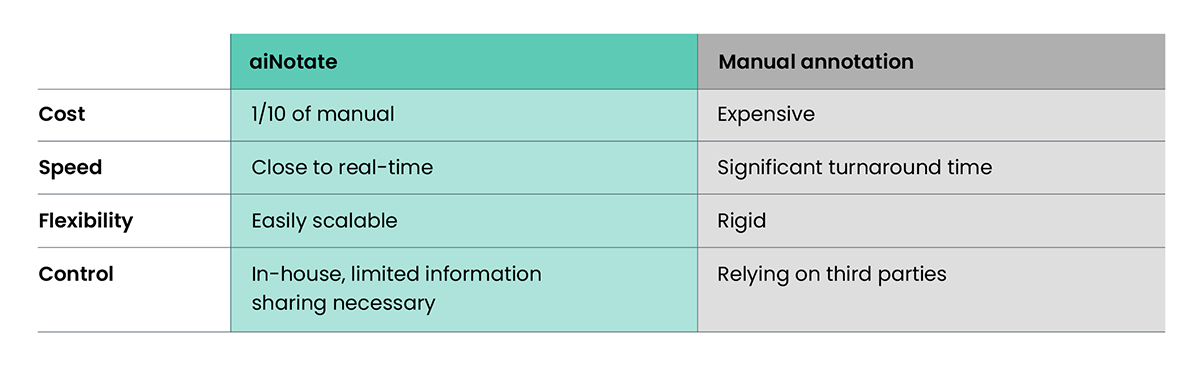

There are multiple key advantages to using aiNotate compared to manual annotation.

Firstly, cost-efficiency. Automatic annotation with aiNotate is approximately one tenth the cost of manual annotation. Secondly, speed. As aiNotate is scalable to multiple GPUs and large servers, data can be annotated within a couple of hours of being recorded.

Most importantly, adaptability. Automated driving is a moving target: with aiNotate, huge amounts of data (thousands of hours) can be relabeled to meet new annotation requirements in days, without having to hire or retrain a whole team of annotators.

Because aiNotate is NN-based, its performance can always be improved and its capabilities expanded by training it on additional data. For instance, when quality checking reveals that aiNotate has annotated some scenarios incorrectly, those recordings can be reannotated manually and then used to retrain aiNotate so that it won’t make the same mistakes again. Thus, manually annotated data can be reused in similar subsequent situations during auto annotation.
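
The feedback loop described here can be sketched as simple bookkeeping: recordings that fail quality checking are set aside for manual reannotation, and the corrected labels are merged into the data used for the next retraining round. The `Recording` type and both function names are hypothetical; the actual aiData pipeline is not described in this post.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recording:
    """Hypothetical record of one auto-annotated recording."""
    recording_id: str
    auto_labels: list
    qc_passed: bool
    manual_labels: Optional[list] = None  # filled in after manual reannotation


def split_by_qc(recordings):
    """Separate recordings that passed quality checking from those that failed."""
    accepted = [r for r in recordings if r.qc_passed]
    rejected = [r for r in recordings if not r.qc_passed]
    return accepted, rejected


def build_retraining_set(training_set, rejected):
    """Return a new training set that includes the manually corrected recordings.

    `training_set` maps recording IDs to labels. Rejected recordings that have
    not been reannotated yet are skipped.
    """
    updated = dict(training_set)
    for rec in rejected:
        if rec.manual_labels is not None:
            updated[rec.recording_id] = rec.manual_labels
    return updated
```

Only the rejected recordings need manual work, so the manual effort scales with the error rate of the auto annotation rather than with the total amount of recorded data.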

We do not think that manual annotation can be completely replaced yet, but aiNotate can help you minimize the need for manual work and save significant costs, even taking the cost of quality checking into account.

Outlook

Annotating data automatically can save you a huge amount of money and time in automated driving development. In our next blog post, we will introduce aiMotive’s synthetic data generation tool which, among its other uses, can help you to complement real-world data with scenes and scenarios that are very costly or dangerous to record on the road.